Part of the Physical AI Series

This article builds on earlier discussions in the Physical AI series, which explores how intelligent systems interact with the physical world and how they are structured in real-world deployments.

As Physical AI moves from concept to real-world deployment, one of the most important questions is how these systems are actually structured.

While use cases vary across industries, most Physical AI implementations follow a common architectural pattern. Physical AI is not a single product or model. It is a system made up of interconnected layers that work together to sense, interpret, and act on the physical world.

This article introduces the Physical AI stack as a practical framework and explains how each layer contributes to building scalable, production-ready systems.

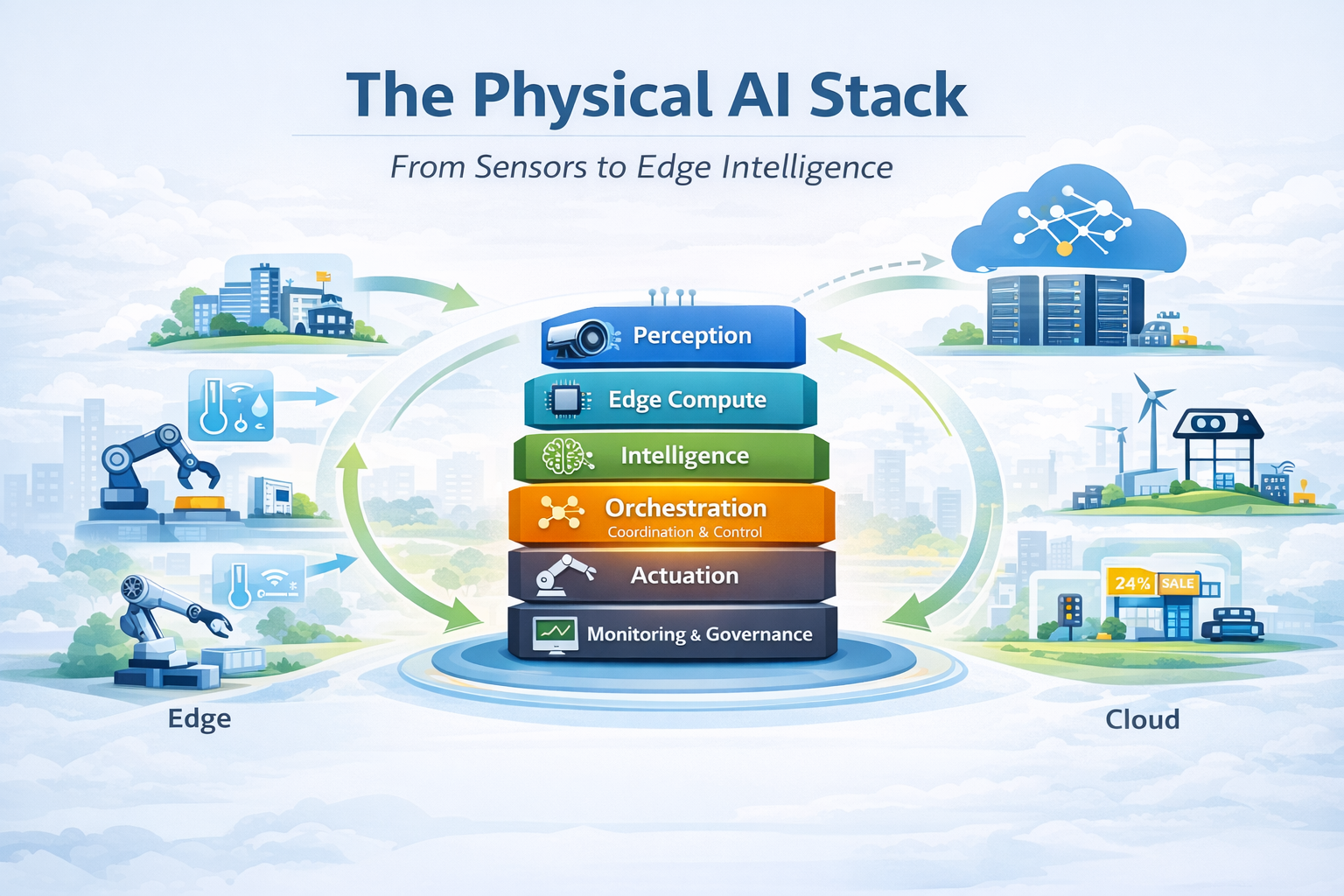

At a high level, the Physical AI stack can be understood through six core layers: perception, edge compute, intelligence, orchestration, actuation, and monitoring and governance.

These layers appear consistently across industries, regardless of whether the system is deployed in buildings, manufacturing environments, infrastructure, or retail operations.

The stack begins with perception, where signals are captured from the physical environment. Sources may include sensors, cameras, or data reported by machines and equipment, all of which translate real-world conditions into digital inputs.
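To make this concrete, here is one way a perception layer might turn heterogeneous inputs into a common digital format. The `Signal` type, the unit conventions, and the three adapter functions are hypothetical and chosen only for illustration; real deployments depend on the specific devices and protocols in use.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class Signal:
    """A normalized digital input produced by the perception layer (hypothetical type)."""
    source: str       # e.g. "room-3/temp" (illustrative naming scheme)
    kind: str         # "temperature", "occupancy", "machine_state", ...
    value: float
    timestamp: datetime


def from_temperature_sensor(sensor_id: str, raw_tenths_c: int) -> Signal:
    # Assumption: this sensor reports temperature in tenths of a degree Celsius.
    return Signal(sensor_id, "temperature", raw_tenths_c / 10.0, datetime.now(timezone.utc))


def from_camera_count(camera_id: str, people_detected: int) -> Signal:
    # A camera (or the vision model behind it) reduced to a single occupancy number.
    return Signal(camera_id, "occupancy", float(people_detected), datetime.now(timezone.utc))


def from_machine_status(machine_id: str, status: str) -> Signal:
    # Machine data often arrives as status strings; map them onto a numeric scale.
    states = {"stopped": 0.0, "idle": 1.0, "running": 2.0}
    return Signal(machine_id, "machine_state", states[status], datetime.now(timezone.utc))


signals = [
    from_temperature_sensor("room-3/temp", 231),
    from_camera_count("entrance/cam", 4),
    from_machine_status("press-7", "running"),
]
for s in signals:
    print(s.source, s.kind, s.value)
```

Once every input shares one shape, the layers above can treat a camera, a thermometer, and a machine controller the same way.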

These inputs are processed through edge compute, which enables real-time responsiveness close to where data is generated. This layer reduces latency and allows systems to operate even in environments with limited connectivity.

On top of this sits intelligence, where rules and models are applied to interpret data. This is where decisions are made, whether through predefined logic, AI inference, or a combination of both.
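A common way to combine predefined logic with AI inference is to let explicit rules handle hard limits and use a model for subtler judgments. The sketch below assumes a temperature stream. `anomaly_score` is a simple statistical placeholder standing in for a trained model, and the 30 °C limit and 3.0 threshold are arbitrary illustrative values.

```python
from dataclasses import dataclass


@dataclass
class Decision:
    action: str
    reason: str


def anomaly_score(temps: list[float]) -> float:
    """Placeholder for AI inference: z-score of the latest reading vs. recent history.

    A real system would call a trained model here.
    """
    history, latest = temps[:-1], temps[-1]
    mean = sum(history) / len(history)
    var = sum((t - mean) ** 2 for t in history) / len(history)
    std = var ** 0.5 or 1.0  # avoid division by zero when history is flat
    return abs(latest - mean) / std


def decide(temps: list[float], limit_c: float = 30.0) -> Decision:
    latest = temps[-1]
    # Predefined logic: a hard safety rule always takes precedence.
    if latest > limit_c:
        return Decision("shut_down", f"rule: {latest} C exceeds {limit_c} C limit")
    # Model-based logic: flag unusual behaviour that no single rule captures.
    score = anomaly_score(temps)
    if score > 3.0:
        return Decision("inspect", f"model: anomaly score {score:.1f}")
    return Decision("none", "within normal range")


print(decide([22.0, 22.2, 21.8, 22.0, 26.0]))
```

Here 26 °C is below the hard limit, so no rule fires, but the jump is far outside recent variation and the model-based path flags it for inspection.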

Connecting everything together is orchestration. This layer determines how decisions are applied across systems, coordinating inputs and outputs while managing dependencies and workflows. It is also the layer that allows systems to evolve over time without being completely redesigned.
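Managing dependencies between steps is one concrete part of this layer. The sketch below models a hypothetical workflow as a dependency graph and uses the standard-library `graphlib.TopologicalSorter` (Python 3.9+) to derive an execution order that respects every dependency. The step names are illustrative, and a production orchestrator would typically also need to handle failures and retries.

```python
from graphlib import TopologicalSorter

# Hypothetical workflow triggered when a meeting room becomes occupied.
# Each step maps to the set of steps that must complete before it runs.
workflow = {
    "read_occupancy": set(),
    "read_temperature": set(),
    "decide_hvac": {"read_occupancy", "read_temperature"},
    "set_hvac": {"decide_hvac"},
    "update_booking_system": {"read_occupancy"},
    "log_outcome": {"set_hvac", "update_booking_system"},
}


def run(workflow: dict[str, set[str]]) -> list[str]:
    """Execute steps in an order that respects every declared dependency."""
    executed = []
    for step in TopologicalSorter(workflow).static_order():
        # A real orchestrator would invoke the step's handler here.
        executed.append(step)
    return executed


order = run(workflow)
print(order)
```

Because dependencies are declared as data rather than wired into each component, adding a step (for example, notifying facilities staff) means adding one entry instead of reworking existing integrations.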

Once decisions are made, they are executed through actuation, where physical or operational changes occur. This may involve controlling machines, adjusting building systems, or triggering workflows and alerts.
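The three kinds of action named above can sit behind a single interface, so that upstream layers issue commands without knowing how each target is driven. The handler functions and the `ACTUATORS` registry below are hypothetical; here they only record what they would have sent instead of driving real equipment.

```python
from typing import Callable

# Hypothetical actuator handlers. In a real deployment these would talk to
# a machine controller, a building management system, or a messaging service.
sent: list[str] = []


def control_machine(target: str, value: str) -> None:
    sent.append(f"machine {target} -> {value}")


def adjust_building(target: str, value: str) -> None:
    sent.append(f"building {target} -> {value}")


def send_alert(target: str, value: str) -> None:
    sent.append(f"alert to {target}: {value}")


ACTUATORS: dict[str, Callable[[str, str], None]] = {
    "machine": control_machine,
    "building": adjust_building,
    "alert": send_alert,
}


def actuate(kind: str, target: str, value: str) -> None:
    """Route a decided action to the handler registered for its actuator type."""
    handler = ACTUATORS.get(kind)
    if handler is None:
        raise ValueError(f"no actuator registered for {kind!r}")
    handler(target, value)


actuate("building", "floor-2/hvac", "setpoint=22C")
actuate("alert", "facilities-team", "press-7 needs inspection")
```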

Finally, monitoring and governance provide visibility into how the system performs. This layer ensures that systems remain reliable, auditable, and manageable over time.
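Auditability usually starts with a record of each input, decision, and action. The sketch below is a minimal in-memory illustration; the `AuditLog` class and its record fields are hypothetical, and a production system would write to durable storage rather than keep records in a Python list.

```python
import json
from dataclasses import dataclass, asdict, field
from datetime import datetime, timezone


@dataclass
class AuditRecord:
    layer: str
    event: str
    detail: dict
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


class AuditLog:
    """Records what the system sensed, decided, and did; entries are only ever appended."""

    def __init__(self) -> None:
        self._records: list[AuditRecord] = []

    def record(self, layer: str, event: str, **detail) -> None:
        self._records.append(AuditRecord(layer, event, detail))

    def by_layer(self, layer: str) -> list[AuditRecord]:
        return [r for r in self._records if r.layer == layer]

    def export_jsonl(self) -> str:
        # One JSON object per line is easy to ship to external log storage.
        return "\n".join(json.dumps(asdict(r)) for r in self._records)


log = AuditLog()
log.record("perception", "reading", source="room-3/temp", value=31.2)
log.record("intelligence", "decision", action="shut_down", reason="over limit")
log.record("actuation", "command", target="press-7", command="stop")
print(log.export_jsonl())
```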

How the Layers Work Together

Although the stack is often presented as a vertical structure, it operates as a continuous loop.

Signals flow from perception into processing and decision-making, are coordinated through orchestration, and result in actions that affect the physical world. The results of those actions are then captured by the perception layer, closing the loop and creating a continuous cycle of feedback and adjustment.
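The loop can be illustrated with a deliberately simple simulation. The room model (how quickly the room warms and cools) is an invented assumption, and the decision logic is a single threshold rule; the point is only that each action changes the next observation, which in turn shapes the next decision.

```python
def simulate(steps: int, setpoint: float = 22.0, start: float = 26.0) -> list[float]:
    """Toy sense -> decide -> act -> observe loop for a single room.

    Assumptions (illustrative only): the room drifts 0.5 C warmer per step
    when cooling is off, and cooling removes 1.5 C per step when it is on.
    """
    temperature = start
    observed = []
    for _ in range(steps):
        reading = temperature                  # perception
        cooling_on = reading > setpoint        # intelligence (simple rule)
        change = -1.5 if cooling_on else 0.5   # actuation effect
        temperature = reading + change         # the physical world responds
        observed.append(temperature)           # the outcome feeds the next step
    return observed


print(simulate(8))  # → [24.5, 23.0, 21.5, 22.0, 22.5, 21.0, 21.5, 22.0]
```

The temperature settles into a narrow band around the setpoint rather than reaching it once and stopping, which is what distinguishes a feedback loop from a one-way pipeline.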

What differentiates successful Physical AI systems is not just the presence of these layers, but how effectively they operate together as a unified system.

Among all layers, orchestration plays a central role in determining whether a Physical AI system can scale.

In many early deployments, systems rely on hard-coded logic and point-to-point integrations. While this may work for initial demonstrations, it quickly becomes difficult to maintain as complexity increases.

Orchestration addresses this by providing a central layer where logic, workflows, and system interactions can be defined and managed. This allows Physical AI systems to remain flexible, adapt to new inputs, and scale across environments.
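The contrast with point-to-point integration can be sketched in a few lines. In the hypothetical `Orchestrator` below, sensors emit events and actuators are referenced by name, while the logic linking them lives in one registry of rules. The event names, thresholds, and action names are illustrative assumptions.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Rule:
    """One orchestration rule: when `event` arrives and `condition` holds, run `actions`."""
    event: str
    condition: Callable[[dict], bool]
    actions: list[str]


class Orchestrator:
    """Central place where event-to-action logic is registered and evaluated."""

    def __init__(self) -> None:
        self.rules: list[Rule] = []

    def add_rule(self, rule: Rule) -> None:
        self.rules.append(rule)

    def handle(self, event: str, payload: dict) -> list[str]:
        triggered = []
        for rule in self.rules:
            if rule.event == event and rule.condition(payload):
                triggered.extend(rule.actions)
        return triggered


orch = Orchestrator()
orch.add_rule(Rule("temperature", lambda p: p["value"] > 28, ["turn_on_cooling", "notify_facilities"]))
orch.add_rule(Rule("occupancy", lambda p: p["count"] == 0, ["turn_off_lights"]))

# Later, a new requirement is added without touching sensor or actuator code.
orch.add_rule(Rule("occupancy", lambda p: p["count"] > 10, ["increase_ventilation"]))

print(orch.handle("occupancy", {"count": 12}))  # → ['increase_ventilation']
```

With hard-coded integrations, the new ventilation requirement would mean editing the occupancy sensor's integration code; here it is one additional rule.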

In practice, orchestration is often the difference between a proof-of-concept and a production-ready system.

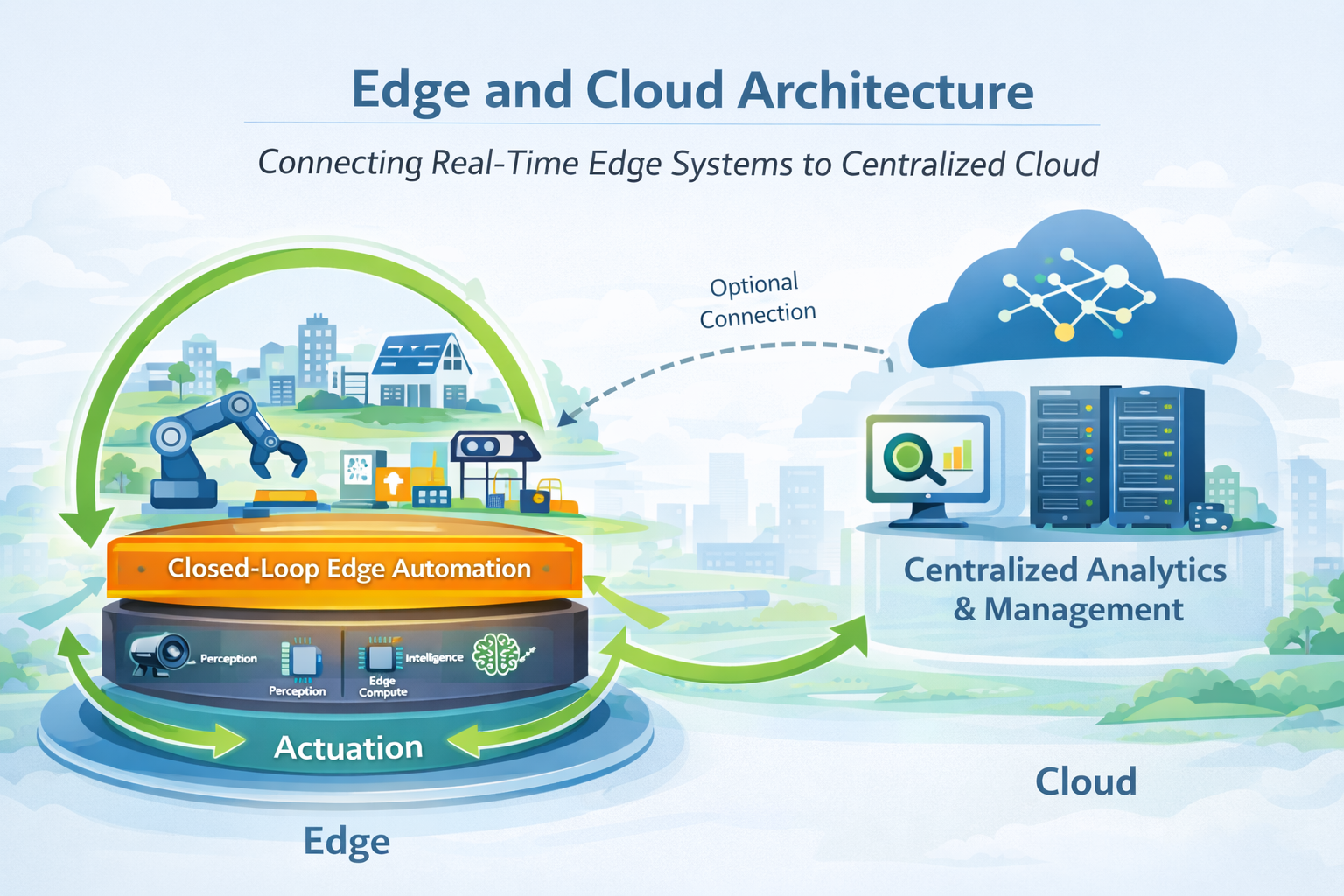

Physical AI systems rarely operate entirely in the cloud or entirely at the edge. Instead, they are typically deployed as hybrid systems.

Edge compute handles real-time decision loops and ensures systems remain responsive even when connectivity is limited. The cloud, on the other hand, supports analytics, long-term data storage, and coordination across multiple sites.
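One way to express this split is an edge node that decides locally and forwards data to the cloud only when a connection is available. The `EdgeNode` class below is a hypothetical sketch; the alarm threshold, the buffer size, and the `cloud_online` flag stand in for real device logic, a real uplink, and a real cloud API.

```python
from collections import deque


class EdgeNode:
    """Makes real-time decisions locally and forwards data to the cloud when it can."""

    def __init__(self, limit: float, buffer_size: int = 1000) -> None:
        self.limit = limit
        # Bounded store-and-forward buffer: the oldest readings are dropped if it fills.
        self.outbox: deque = deque(maxlen=buffer_size)
        self.actions: list[str] = []

    def on_reading(self, value: float) -> None:
        # Real-time loop: decided on the edge, with no network round trip.
        if value > self.limit:
            self.actions.append(f"alarm:{value}")
        # Data destined for cloud analytics and long-term storage is queued.
        self.outbox.append(value)

    def sync(self, cloud_online: bool, cloud_store: list) -> int:
        """Flush queued readings to the cloud if connectivity is available."""
        if not cloud_online:
            return 0
        sent = 0
        while self.outbox:
            cloud_store.append(self.outbox.popleft())
            sent += 1
        return sent


cloud: list[float] = []
edge = EdgeNode(limit=30.0)
for v in [25.0, 31.5, 27.0]:
    edge.on_reading(v)
edge.sync(cloud_online=False, cloud_store=cloud)  # link down: nothing is sent
edge.sync(cloud_online=True, cloud_store=cloud)   # link restored: backlog is flushed
```

The alarm fires immediately regardless of connectivity, while the cloud still receives the complete history once the link returns.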

Deciding how to distribute responsibilities between edge and cloud is an important architectural choice, and one that becomes more critical as systems scale.

Viewing Physical AI through a layered framework changes how systems should be designed.

It encourages a shift away from isolated components toward integrated systems. It also highlights the importance of designing for adaptability, where logic and workflows can evolve without requiring complete system redesign.

Most importantly, it reinforces that value is created not by individual technologies, but by how effectively they are connected and orchestrated.

Within this architecture, the orchestration and edge layers are where Physical AI systems become operational.

Gravio is designed to operate across these layers, enabling coordination between sensors, systems, and actions. It bridges IT and OT environments, allowing enterprise systems, physical infrastructure, and AI models to work together within a unified framework.

By supporting distributed, real-world deployments, Gravio enables Physical AI systems to move beyond isolated implementations and scale into production-ready environments.

Closing Perspective

The Physical AI stack provides a practical way to understand how intelligent systems interact with the physical world. While specific technologies may vary, the underlying structure remains consistent across use cases.

By approaching Physical AI as a layered system, organizations can design solutions that are not only functional, but also adaptable and scalable over time. This sets the stage for the next discussion, where we examine why Physical AI systems increasingly rely on edge-first architectures to meet real-world requirements.